Mustafa Suleyman's Calculator

Suleyman sees walls to hurdle. I see warning signs.

Mustafa Suleyman’s opinion piece in MIT Technology Review argues that AI development won’t hit a wall anytime soon. He points to the ever-increasing speed of AI as the achievement of our age. Then he picks a most unfortunate analogy for AI to make his case.

Suleyman’s AI vision hurdles past the skeptics’ walls because it can, and he sees that as proof of progress. I see those walls as warning signs, ones he should slow down and read.

The 2026 Stanford AI Index report runs hundreds of pages. Model after model, metric after metric. The collective grade on accuracy: C-student at best. Many fail outright.

| Benchmark | Top Model | Score |
| --- | --- | --- |
| Language Understanding (p. 81) | Gemini 3.1 Pro | 91.2% |
| Multimodal Reasoning (p. 94) | Gemini 3.1 Pro Preview | 88.21% |
| Computer Tasks across OS (p. 113) | Claude Opus 4.5 | 66.30% |
| Real-World Software Issues (p. 100) | Claude 4.5 Opus | 76.80% |
| Vibe Coding Bench (p. 102) | Claude 4.6 Opus | 56.57% |

Yes, answers arrive faster. An improbability drive achieving ludicrous speed is not something to celebrate measuring. Speed is not a metric we should value. Nor should Suleyman.

Trust Is the Only Calculation That Matters

To make his case, Suleyman reaches for a calculator.

Think of AI training as a room full of people working calculators. For years, adding computational power meant adding more people with calculators to that room. Much of the time those workers sat idle, drumming their fingers on desks, waiting for the numbers to come through for their next calculation. Every pause was wasted potential. Today’s revolution goes beyond more and better calculators (although it delivers those); it is actually about ensuring that all those calculators never stop, and that they work together as one.

Somehow, more calculators running faster is an improvement. Suleyman blows past what a calculator actually is.

My Texas Instruments scientific calculator never gave me a wrong answer. Given a valid input, it returned an objectively verifiable result. If the answer was wrong, the error was in my formula. My teachers taught me to check my work, not its work. It never invented an answer to a problem I hadn’t written down first. It never told me the value of pi was 4. It never gave three different answers to the same question.

In the age of AI, I am teaching my daughter to distrust answers from a machine.

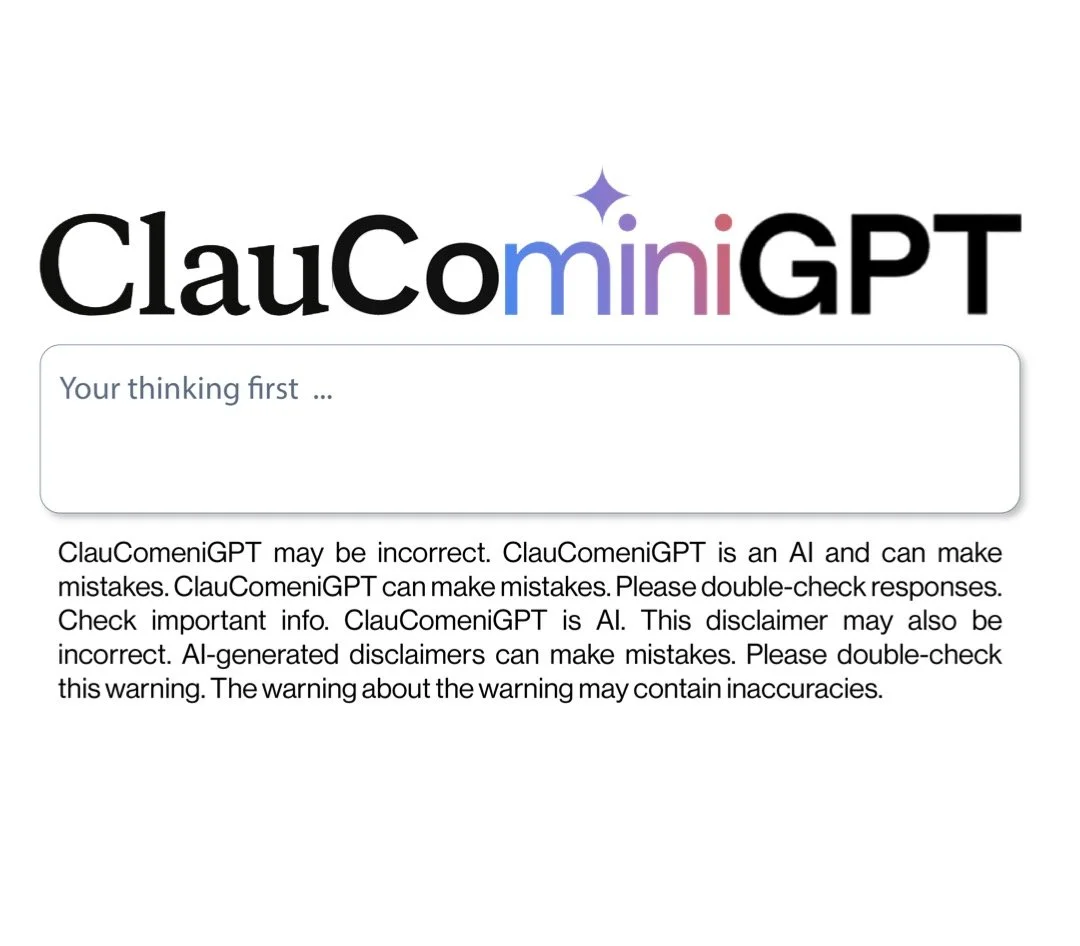

That distrust has nothing to do with the speed of the response. It is what the product tells you about itself. No technology in the computer age has been marketed as a partner to human work while shipping with a permanent disclaimer that its output may be wrong. "AI-generated content may be incorrect." That sentence sits under every answer the most hyped technology of the decade generates.

The compendium of disclaimers across all platforms warns that the accuracy isn’t there yet.

Consider this future Suleyman points to:

What does all this get us? I believe it will drive the transition from chatbots to nearly human-level agents—semiautonomous systems capable of writing code for days, carrying out weeks- and months-long projects, making calls, negotiating contracts, managing logistics. Forget basic assistants that answer questions. Think teams of AI workers that deliberate, collaborate, and execute.

Now imagine Suleyman’s future at 70%, 80%, even 90% accuracy. Suleyman’s argument about speed is about as useful as Texas Instruments claiming the first natural language processor because the calculator could spell ShELLOIL, or the first smartphone because it could text hELL.O. (If you are over 50, you already know: 71077345 and 0.7734, upside down.)

Accuracy is the metric. It defined the calculator. Those calculators, and the computers that followed, powered the research that now bears fruit as AI. They got here by being accurate. We got here by trusting them. Not by being fast.

A Better Use For His Calculator

AI tools are great assistants when used as a check on human thought, not as a substitute for it. Suleyman could have run his own analogy past Copilot. I did.

Response from Copilot: “Comparing AI to a scientific calculator: Overstates reliability. Undermines critical thinking. Encourages misuse and over-automation. Treating those as equivalent is not just inaccurate—it’s operationally dangerous.”

Microsoft’s own AI knows the analogy is deeply flawed.

This is AI used as a check on human thought. Speed is not the metric. Accuracy is. Intellectual honesty is. Authentic human expression is.

— Mike O’Brien

Addendum:

The second unfortunate analogy from the same article: “We evolved for a linear world. If you walk for an hour, you cover a certain distance. Walk for two hours and you cover double that distance. This intuition served us well on the savannah. But it catastrophically fails when confronting AI and the core exponential trends at its heart.”

Envision AI on the savannah:

Human Prompt: Where are you racing to? Is that a lion up ahead?

AI Response: Yes, but think of it as a big cat.

Image credit (Texas Instruments calculator): Ray Reyes (@rareyesphoto), Unsplash